As part of my (almost) daily drive to and from one of my clients, I pass through the sub-sea Oslofjord tunnel (Oslofjordtunnelen). Now what has driving got to do with screen scraping and RSS, you say?

Hang on, I’m getting there.

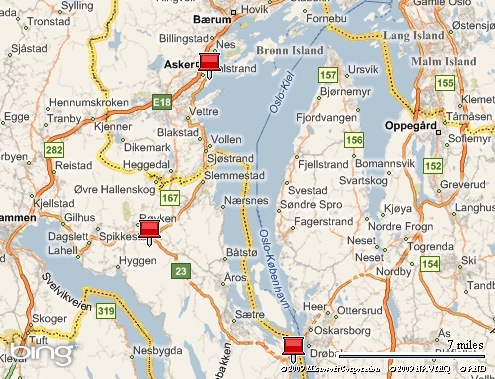

Below is a map extract that shows part of my route. The topmost pin is where I start out; the bottom-most pin is the Oslofjord tunnel. The pin in the middle is where, more often than not, a sign shows up stating that the tunnel is closed for maintenance. You can imagine my frustration when I’m forced to drive all the way back north to get around the Oslofjord!

To avoid that pain I set out to find a feed with traffic status updates and ended up at this page published by the Norwegian Public Roads Administration (NPRA). The page has regularly updated traffic information (all in Norwegian, mind you) but, to my frustration, only as static web pages. No feed in sight!

This, at last, is where screen scraping comes into play. You could write your own scraper in any modern runtime these days. But being a good/lazy developer, I know there are already quite good services out there that make it a breeze to set up feeds with data scraped off web pages. And lo and behold, I now burn a feed with the latest traffic updates!
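If you did want to roll your own, a minimal sketch in Python might look something like the following. It assumes the traffic messages sit in plain `<li>` elements, which is a guess on my part and not the NPRA page's actual markup. The sample HTML, the `example.com` link, and the names `ListItemScraper`, `scrape_messages` and `build_rss` are all made up for illustration.

```python
from html.parser import HTMLParser
import xml.etree.ElementTree as ET


class ListItemScraper(HTMLParser):
    """Collects the text of every <li> element (assumed markup, not the real page's)."""

    def __init__(self):
        super().__init__()
        self.items = []
        self._buffer = None  # None while we are outside an <li>

    def handle_starttag(self, tag, attrs):
        if tag == "li":
            self._buffer = []

    def handle_data(self, data):
        if self._buffer is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "li" and self._buffer is not None:
            # Collapse runs of whitespace left over from the HTML layout.
            text = " ".join("".join(self._buffer).split())
            if text:
                self.items.append(text)
            self._buffer = None


def scrape_messages(html):
    """Return the list of message strings found in the given HTML."""
    scraper = ListItemScraper()
    scraper.feed(html)
    scraper.close()
    return scraper.items


def build_rss(title, link, messages):
    """Wrap the messages in a bare-bones RSS 2.0 document and return it as a string."""
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = title
    ET.SubElement(channel, "link").text = link
    ET.SubElement(channel, "description").text = "Scraped traffic messages"
    for message in messages:
        item = ET.SubElement(channel, "item")
        ET.SubElement(item, "title").text = message
        ET.SubElement(item, "link").text = link
    return ET.tostring(rss, encoding="unicode")


# Hypothetical stand-in for a downloaded page.
SAMPLE_HTML = """
<ul>
  <li>Oslofjordtunnelen: closed for maintenance</li>
  <li>E6: roadworks,   expect delays</li>
</ul>
"""

if __name__ == "__main__":
    messages = scrape_messages(SAMPLE_HTML)
    print(build_rss("Traffic updates", "https://example.com/traffic", messages))
```

In real life you would fetch the page first (for instance with `urllib.request.urlopen`) and adapt the parser to whatever markup the page really uses. That fiddly part is exactly what the ready-made services take off your hands.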

So… having basked in the glory of my genius for a couple of days, I thought it a good idea to write up this blog post for the greater good of mankind. To make the story a bit shorter, what I discovered while rummaging around the NPRA site is that they do indeed have great support for RSS!

Feeling a bit stupid, I will now go and redirect my FeedBurner setup… and please let me know if there is a moral to this story.

Good night.